There's a category of web work that everyone knows needs doing and almost nobody proactively does. Not because it's difficult. Not because it's expensive. But because it's invisible, unglamorous, and nobody, client or agency alike, naturally raises their hand to start the conversation.

We're talking about the maintenance layer. Security headers. Outdated dependencies. Declining performance scores. Missing schema. The kind of thing that sits in the background of a live website, not breaking anything visibly, until one day it does. Or until a client's site gets flagged in a security audit. Or until their search rankings start sliding and nobody can immediately point to why.

The build gets the budget meeting. The launch gets the champagne. The maintenance work gets the awkward silence.

In 2026, that's starting to change, and AI is a significant part of why.

Why maintenance work is hard to sell

It's worth being honest about why this problem exists, because it isn't anyone's fault exactly. It's structural.

Clients engage agencies when they have a problem they can see: the site looks dated, they need a new feature, the rebrand is happening. The relationship is project-shaped. There's a brief, a proposal, a delivery, a sign-off. Ongoing maintenance doesn't fit that shape. It's continuous rather than episodic, and its value is largely preventative, which means the benefit is often invisible. You don't see the security incident that didn't happen.

From the agency side, the challenge is similar. You might be fully aware that a client's jQuery is three versions out of date, or that their performance score has been falling since they added a new analytics tool. But turning that awareness into a client conversation requires effort: you need to assess the issue, understand the fix, estimate the time, and write it up in language a non-technical client can act on. For a single client that's manageable. Across a portfolio of twenty or thirty sites, it's a significant overhead, and one that often just doesn't happen.

The result is a gap. Real work that genuinely needs doing, clients who would benefit from it being done, and a communication problem sitting between the two.

What AI changes about this

The emergence of AI-assisted tooling doesn't change the underlying reality of website maintenance: security headers still need setting, dependencies still need updating, performance still degrades over time. What it changes is the cost of surfacing that work.

The part that takes time isn't knowing what's wrong. Any decent monitoring setup will tell you that. The part that takes time is translating that technical finding into something a client can understand and agree to pay for. That translation (from scan output to plain-English estimate) is exactly where AI adds genuine value.

Modern monitoring tools can now take a set of audit findings and produce a structured client estimate automatically: what's wrong, what it means in plain English, how to fix it on the client's specific platform, and a realistic time estimate that accounts for testing and deployment. Not a generic checklist. A document specific to that site, that platform, those findings.

That changes the economics of the conversation. If producing a maintenance estimate takes two minutes rather than twenty, it becomes viable to do it proactively for every client, every time a new issue surfaces. The barrier to starting the conversation drops significantly.

The kinds of work this surfaces

To make this concrete, here's what typically sits in the maintenance layer across a client portfolio, and why it rarely gets raised.

Security housekeeping

Missing HTTP security headers (HSTS, Content Security Policy, X-Frame-Options, X-Content-Type-Options) are extraordinarily common on live websites, including ones built by good agencies. They're often missed during the original build because they're not visible, don't break anything immediately, and aren't always on the standard QA checklist. Adding them typically takes a couple of hours including testing and deployment, and on a common platform such as WordPress the fix is largely the same from one site to the next. It's not urgent work until, suddenly, it is.
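As a rough illustration of what a check for these four headers involves, here is a minimal Python sketch. The function name and the sample response are made up for the example, and it only tests whether each header is present, not whether its value is sensible.

```python
# The four headers named above, by their HTTP header names.
REQUIRED_HEADERS = (
    "Strict-Transport-Security",  # HSTS
    "Content-Security-Policy",
    "X-Frame-Options",
    "X-Content-Type-Options",
)


def missing_security_headers(response_headers):
    """Return the required header names that are absent from a response.

    HTTP header names are case-insensitive, so the comparison is done
    in lowercase.
    """
    present = {name.lower() for name in response_headers}
    return [name for name in REQUIRED_HEADERS if name.lower() not in present]


# Hypothetical response headers, as a scan might record them.
example = {
    "content-type": "text/html; charset=utf-8",
    "x-content-type-options": "nosniff",
}
print(missing_security_headers(example))
```

For the sample response this reports the three headers that are not set. A real scan would also need to judge the values, since a header can be present and still be too permissive.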

Dependency updates

jQuery 3.4 has two documented XSS vulnerabilities in the National Vulnerability Database (CVE-2020-11022 and CVE-2020-11023, both fixed in jQuery 3.5.0). It's been superseded for years. It's also still running on a significant proportion of live websites, including some built recently, because the theme or plugin that included it hasn't been updated. The same is true of WordPress core versions, outdated plugins, and various JavaScript libraries. The update is usually straightforward. The conversation to justify doing it is the hard part.
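The check itself is simple, which is part of the point. Below is a minimal sketch of a version comparison for the jQuery case, assuming the version string has already been detected on the page. The function names are hypothetical. The sketch flags anything below 3.5.0, the release that fixed CVE-2020-11022 and CVE-2020-11023, and ignores the lower version bounds given in the advisories.

```python
import re

# CVE-2020-11022 and CVE-2020-11023 were both fixed in jQuery 3.5.0.
FIXED_IN = (3, 5, 0)


def parse_version(text):
    """Turn '3.4.1' into (3, 4, 1); missing parts default to zero."""
    match = re.match(r"^(\d+)(?:\.(\d+))?(?:\.(\d+))?", text.strip())
    if match is None:
        raise ValueError(f"not a version string: {text!r}")
    return tuple(int(part or 0) for part in match.groups())


def needs_jquery_update(version):
    """True when the detected version predates the 3.5.0 fixes."""
    return parse_version(version) < FIXED_IN


for detected in ("1.12.4", "3.4.1", "3.5.0", "3.7.1"):
    print(detected, needs_jquery_update(detected))
```

Comparing tuples of integers avoids the classic mistake of comparing version strings alphabetically, where "3.10.0" would sort before "3.5.0".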

Performance degradation

Performance scores don't stay static. A site that scored 90 at launch might be sitting at 68 two years later because marketing added a video embed, someone installed an analytics tool that loads synchronously, and the hero image got replaced with an uncompressed version. None of these are dramatic events. Together they compound. A monitoring tool with trend data can show exactly when a score started declining and correlate it with changes, which makes the case for a remediation project significantly easier.
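To show what "exactly when a score started declining" can mean in practice, here is a minimal sketch that finds the steepest fall between consecutive readings. The function name and the intermediate scores are invented for illustration; only the start and end values (90 and 68) come from the example above.

```python
def largest_drop(history):
    """Return (date, drop) for the biggest fall between consecutive readings.

    `history` is a list of (date, score) pairs in chronological order.
    Returns None if the score never fell.
    """
    worst = None
    for (_, before), (date, after) in zip(history, history[1:]):
        drop = before - after
        if drop > 0 and (worst is None or drop > worst[1]):
            worst = (date, drop)
    return worst


# Invented readings for a site that launched at 90 and now scores 68.
history = [
    ("2024-01", 90),
    ("2024-07", 88),
    ("2025-01", 79),
    ("2025-07", 74),
    ("2026-01", 68),
]
print(largest_drop(history))
```

With these readings the steepest fall shows up at the 2025-01 reading, which is the date to compare against the deployment log or the list of recently added scripts.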

SEO and AI discoverability

This is increasingly a maintenance concern rather than a build concern. Schema markup needs keeping up to date as content evolves. Title tags drift. AI crawlers get inadvertently blocked by robots.txt changes. The emerging standards around AI discoverability, including llms.txt, structured data quality, and semantic HTML, are moving fast enough that a site optimised for AI search six months ago may no longer be. Clients who care about visibility in AI-powered search results need ongoing attention here, not a one-off audit.
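The robots.txt case is one of the easier ones to check automatically. The sketch below uses Python's standard `urllib.robotparser` to test whether a given robots.txt blocks a few AI crawlers. The list of user-agent tokens is illustrative rather than exhaustive, and the function name and sample file are assumptions made for the example.

```python
from urllib.robotparser import RobotFileParser

# Illustrative AI crawler user-agent tokens. Check each vendor's
# documentation for current names before relying on this list.
AI_CRAWLERS = ("GPTBot", "ClaudeBot", "PerplexityBot")


def blocked_ai_crawlers(robots_txt, path="/"):
    """Return the crawlers from AI_CRAWLERS that may not fetch `path`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_CRAWLERS if not parser.can_fetch(agent, path)]


# Hypothetical robots.txt that blocks one AI crawler and allows the rest.
example = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""
print(blocked_ai_crawlers(example))
```

Running a check like this after every robots.txt change is one way to catch an inadvertent block before it affects visibility.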

The account management conversation

Here's what this looks like in practice for an account manager at a digital agency.

A client's site gets flagged: security score of 62, three failed checks, two warnings. Previously, turning that into a proposal meant opening the scan results, understanding each finding, researching the fix, estimating the time, and writing it up. Maybe 30 to 45 minutes if you're efficient.

With AI-assisted reporting, that process produces a structured document automatically: executive summary, per-issue breakdown, fix instructions specific to the client's platform, time estimates, priority rating. The account manager reviews it, adjusts anything that doesn't fit the specific situation, and sends it. The conversation that might not have happened at all now takes ten minutes.

The client receives something they can understand and act on. Not "your X-Frame-Options header is missing" but "your site is currently vulnerable to a type of attack called clickjacking, where your pages could be secretly embedded inside another website. Adding the protection takes about 30 minutes and we'd recommend doing it as part of a broader security tidy-up that would take around three hours in total."

That's a proposal a client can say yes to. It's also genuinely in their interest, which is the important part.
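For readers who want to picture the structured document described above, here is a minimal sketch of how findings might be modelled and rendered. It is not how any particular tool works. The `Finding` class, the field names, and every figure except the 30-minute clickjacking fix and the three-hour total are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    title: str          # technical name of the issue
    plain_english: str  # what it means for the client
    fix: str            # fix instruction for the client's platform
    minutes: int        # estimate including testing and deployment
    priority: int       # 1 = most urgent


def render_estimate(site, findings):
    """Build a plain-text estimate, most urgent issue first."""
    ordered = sorted(findings, key=lambda f: f.priority)
    total = sum(f.minutes for f in ordered)
    lines = [
        f"Maintenance estimate for {site}",
        f"{len(ordered)} issues, about {total / 60:.1f} hours in total",
        "",
    ]
    for f in ordered:
        lines.append(f"[P{f.priority}] {f.title} ({f.minutes} min)")
        lines.append(f"  What it means: {f.plain_english}")
        lines.append(f"  Fix: {f.fix}")
    return "\n".join(lines)


# Hypothetical findings; only the 30-minute figure comes from the article.
findings = [
    Finding("Outdated jQuery", "A library on your site has known security flaws.",
            "Update the bundled jQuery to 3.5.0 or later.", 90, 2),
    Finding("X-Frame-Options missing", "Your pages could be embedded in another site.",
            "Send X-Frame-Options: SAMEORIGIN from the web server.", 30, 1),
    Finding("HSTS missing", "Browsers are not told to always use HTTPS.",
            "Add a Strict-Transport-Security header.", 60, 3),
]
print(render_estimate("example.com", findings))
```

The AI-assisted part of the process is filling in the plain-English and fix fields from the scan output. The account manager's review step still applies to whatever it produces.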

Recurring revenue versus one-off projects

The broader shift this points to is from project-based agency relationships to ongoing ones. That transition has been discussed in the agency world for years, but it's hard to operationalise without a clear mechanism for generating regular, justified work.

Website maintenance done properly isn't a retainer for retainer's sake. It's a stream of small, real, necessary interventions: the dependency update, the security header, the image that needs compressing, the schema block that needs a new property. None of these individually justify a long brief and a formal proposal. Together, batched and surfaced intelligently, they represent a steady volume of work that keeps sites healthy and agencies meaningfully engaged with their clients between projects.

The AI component here isn't doing the work. It's making the communication around the work efficient enough that it actually happens. Which is, arguably, the thing that's been missing.

Google's own Core Web Vitals guidance is unambiguous on the point that site health is an ongoing concern rather than a one-time optimisation. The agencies building processes around continuous monitoring and regular client communication are better positioned to act on that than those waiting for clients to raise issues first.

A note for clients reading this

If you've landed here as a client rather than an agency: the maintenance work your agency might raise with you is real work that makes a real difference. A security header isn't exciting. Neither is a seatbelt. The sites that get compromised, that lose ground in search, or that start loading slowly for visitors are overwhelmingly the ones where the unglamorous ongoing work didn't happen.

An agency that proactively surfaces these issues and presents them clearly is doing a better job than one that waits to be asked. The estimate they share is only a starting point for a conversation, but that conversation is worth having.

Getting started

If you run a web agency and manage sites for clients, the foundation for all of this is visibility across your portfolio: knowing which sites have security issues, which have declining performance, and which have SEO problems that have emerged since the last build.

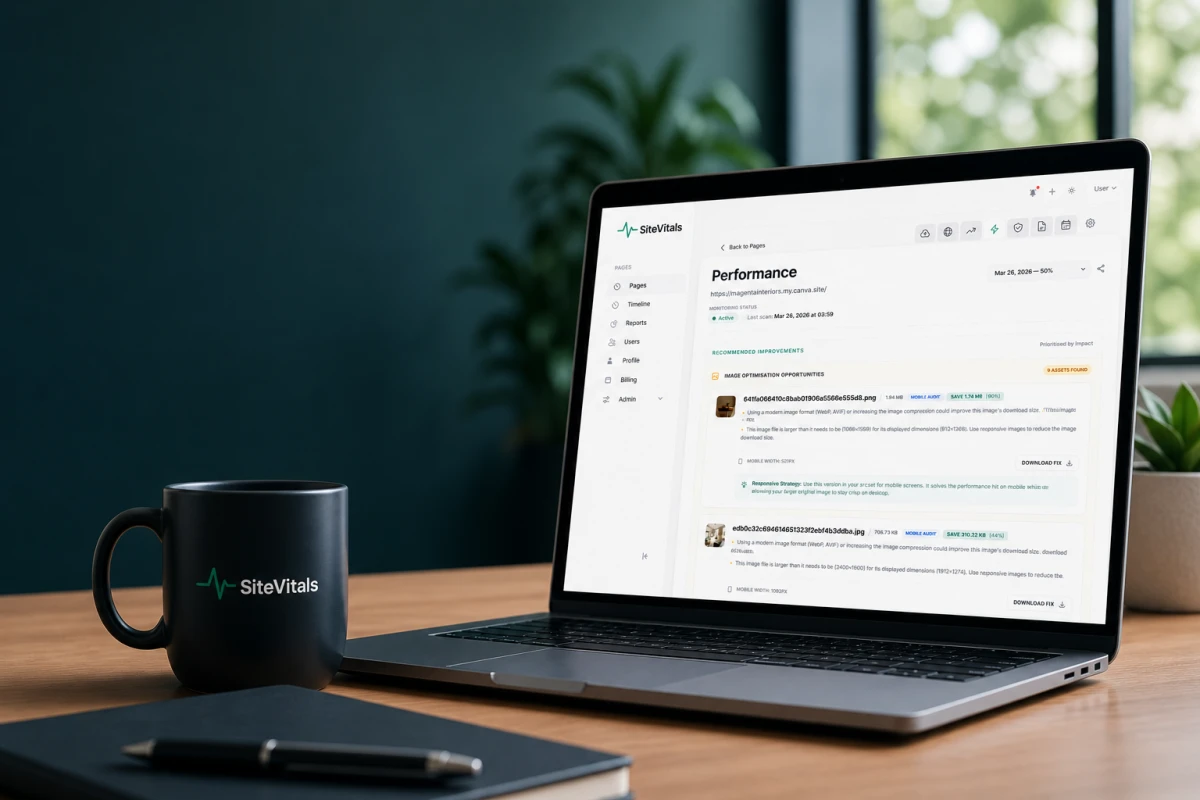

SiteVitals monitors websites across uptime, performance, security, SEO, integrity, domain, and SSL, and surfaces issues with enough context to turn them into client conversations. The AI estimate feature generates client-ready proposals directly from scan data, specific to the platform, scoped to the actual findings, with realistic time estimates built in. You can read more about how SiteVitals works for agencies, or start a free trial and run your first estimate today.

Articles written collaboratively by the SiteVitals team.