Your nightly backup script runs at 2am. It has done for years. It's in crontab, it works, nobody thinks about it. Until the morning you discover it stopped running three weeks ago and the database you need to restore from is three weeks stale.

The problem with scheduled tasks is that their failure mode is silence. A web page going down produces a visible error. A cron job failing produces nothing - no error page, no angry customer, no phone call. Just an absence that nobody notices until it matters.

This is true for backups, but also for queue workers, report generators, invoice processors, data syncs, cache warmers, and any other background job your infrastructure depends on. If you manage sites for clients, multiply that by every client and every server.

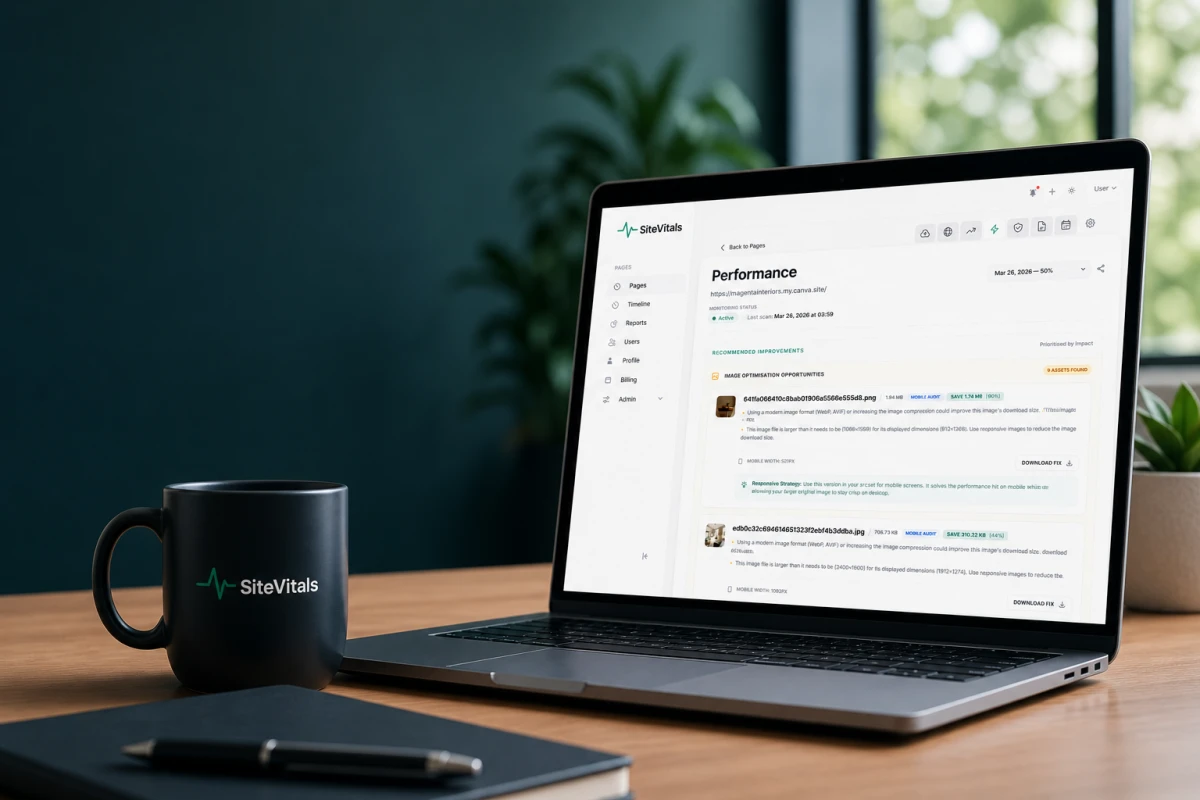

Today we're introducing Cron Job Monitoring in SiteVitals. You create a monitor, get a unique ping URL, add one line to your cron job, and SiteVitals watches for the signal. If the signal doesn't arrive on time, you get an alert. That's it. That's the whole thing.

How it works

The concept is sometimes called heartbeat monitoring. Your cron job "phones home" to SiteVitals after it runs. SiteVitals knows when the next call should arrive. If it doesn't, something is wrong.

You set up a monitor by giving it a name, telling SiteVitals how often the job runs (either a simple interval like "every 60 minutes" or a cron expression like 0 2 * * *), and setting a grace period - how long SiteVitals should wait beyond the expected time before raising the alarm. The grace period accounts for normal variation in job runtime. A backup that usually takes 8 minutes but occasionally takes 12 shouldn't wake you up at 2:13am.

Once created, you get a unique URL that looks like https://www.sitevitals.co.uk/ping/a3f8x9k2. Add a curl call to the end of your cron job, and the monitor is live.

The simplest integration: one line of curl

The most common setup takes about 30 seconds. If your crontab currently looks like this:

30 2 * * * /usr/local/bin/backup.sh

You change it to this:

30 2 * * * /usr/local/bin/backup.sh && curl -fsS -o /dev/null https://www.sitevitals.co.uk/ping/a3f8x9k2

The && is important. It means the ping only fires if the preceding command succeeds (exits with code 0). If your backup script fails, the ping never arrives, and SiteVitals knows something is wrong.

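The flags keep curl quiet without hiding failures: -f makes curl exit with a non-zero code on an HTTP error response, -s suppresses the progress meter, -S still prints an error message if the request fails, and -o /dev/null discards the response body so it doesn't pollute your cron output.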
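If your job is launched from Python rather than straight from crontab, the same "ping only on success" rule can be sketched as below. This is an illustration, not an official SiteVitals client: run_then_ping and http_ping are names made up for this example, and the URL is the sample one used throughout this post.

```python
import subprocess
import urllib.request

PING_URL = "https://www.sitevitals.co.uk/ping/a3f8x9k2"  # sample URL from this post


def http_ping(url: str, timeout: float = 10.0) -> None:
    """Send a plain GET; the response body is not needed."""
    with urllib.request.urlopen(url, timeout=timeout):
        pass


def run_then_ping(command, ping=http_ping, url=PING_URL) -> int:
    """Run `command` and call `ping(url)` only if it exits with code 0.

    This mirrors `command && curl ...` in a crontab line.
    """
    exit_code = subprocess.run(command).returncode
    if exit_code == 0:
        ping(url)
    return exit_code
```

Calling run_then_ping(["/usr/local/bin/backup.sh"]) would run the backup and ping only if it succeeded. The ping function is passed in as a parameter so you can swap it for a stub when testing.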

Laravel

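If you're running a Laravel scheduler, it's even cleaner. In your schedule definition (the schedule() method of app/Console/Kernel.php; in routes/console.php you'd call the Schedule facade instead):

$schedule->command('your:command')->daily()->pingOnSuccess('https://www.sitevitals.co.uk/ping/a3f8x9k2');

Laravel's pingOnSuccess() fires only when the command exits successfully. thenPing() also exists, but it fires after every run whether or not the command succeeded, so a failing job would still look healthy. No shell scripting required.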

PHP, Python, and PowerShell

For PHP scripts that aren't running through a framework scheduler, add this at the end of your script:

file_get_contents('https://www.sitevitals.co.uk/ping/a3f8x9k2');

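For Python, using the requests library (the timeout stops a network problem from hanging your job):

requests.get('https://www.sitevitals.co.uk/ping/a3f8x9k2', timeout=10)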

For Windows Task Scheduler jobs using PowerShell:

Invoke-WebRequest -Uri 'https://www.sitevitals.co.uk/ping/a3f8x9k2' -UseBasicParsing | Out-Null

The ping URL accepts both GET and POST requests. A simple GET is all you need for basic monitoring.
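When the ping is the last line of a script, a network blip shouldn't turn a successful job into a crashed one. Here's a minimal sketch of a fail-safe GET using only Python's standard library. The name safe_ping and its opener parameter are made up for this example, and returning False on error is a design choice, not something SiteVitals requires.

```python
import urllib.request


def safe_ping(url: str, timeout: float = 10.0, opener=urllib.request.urlopen) -> bool:
    """GET `url`; return True on a 2xx response, False on a network or HTTP error.

    urllib raises HTTPError for 4xx/5xx responses and URLError for connection
    problems; both are subclasses of OSError, as are socket timeouts.
    """
    try:
        with opener(url, timeout=timeout) as response:
            return 200 <= response.status < 300
    except OSError:
        return False
```

If the ping can't be delivered, the job still exits cleanly and SiteVitals simply sees a missed signal, which is the behaviour you'd get from a failed curl call too.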

Going deeper: duration tracking, failure reporting, and diagnostics

The one-line curl setup covers most cases, but some jobs deserve closer attention. SiteVitals supports four signal types that give you progressively more visibility into what your jobs are actually doing.

Start and finish signals for duration tracking

If you want to know how long your job takes - not just whether it ran - send a start signal before the job and a success signal after. Note the ; after the start ping: unlike &&, it lets the backup run even if the start ping itself can't be delivered.

30 2 * * * curl -fsS -o /dev/null https://www.sitevitals.co.uk/ping/a3f8x9k2/start ; /usr/local/bin/backup.sh && curl -fsS -o /dev/null https://www.sitevitals.co.uk/ping/a3f8x9k2

SiteVitals calculates the duration between the /start signal and the success signal, and records it against the ping. Over time, this builds a runtime chart on the monitor's dashboard so you can spot jobs that are gradually slowing down - often the first sign of a growing dataset or a query that needs optimising.

The start signal also enables stuck job detection. If SiteVitals receives a /start but no success or failure signal arrives within the grace period, it marks the monitor as down and alerts you. The job started but never finished - and that's usually worse than a job that didn't start at all.
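For jobs written in Python, the start and success signals fit naturally into a context manager. The sketch below is one way to do it, not an official client: start_and_finish and http_get are names made up for this example, and swallowing network errors is a design choice so that monitoring can never break the job itself.

```python
import urllib.request
from contextlib import contextmanager

PING_URL = "https://www.sitevitals.co.uk/ping/a3f8x9k2"  # sample URL from this post


def http_get(url: str) -> None:
    """Send a GET and ignore network errors."""
    try:
        with urllib.request.urlopen(url, timeout=10):
            pass
    except OSError:
        pass


@contextmanager
def start_and_finish(ping_url: str = PING_URL, send=http_get):
    """Send /start on entry and the success ping on a clean exit."""
    send(ping_url + "/start")
    yield
    # Only reached if the wrapped block did not raise.
    send(ping_url)
```

You'd wrap the job body in `with start_and_finish():`. If the block raises, no success ping is sent, so the monitor is left with a /start and nothing after it, which is the stuck-job case described above.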

Explicit failure reporting

Sometimes a job runs but fails in a way it can detect itself. A database dump that gets an access denied error, a report generator that finds no data, an API sync that receives a 500 response. For these, hit the /fail endpoint:

curl -X POST https://www.sitevitals.co.uk/ping/a3f8x9k2/fail -d "mysqldump failed: Access denied for user 'backup'@'localhost'"

A /fail signal moves the monitor to down immediately and triggers an alert - no waiting for the grace period. The POST body (the error message) is stored and visible in the ping history, so when you get the alert, you already know what went wrong.

Sending runtime metadata

You can include additional diagnostics with any ping by adding query parameters or POST fields:

curl -X POST https://www.sitevitals.co.uk/ping/a3f8x9k2 -F 'runtime=12.5' -F 'memory=29360128' -F 'exit_code=0'

runtime is the job duration in seconds (as a float). If you're already measuring this in your script, sending it here is more accurate than relying on the start/finish signal timing, which includes network latency.

memory is peak memory usage in bytes. Useful for jobs that process large datasets - you can spot memory growth before it causes an out-of-memory crash.

exit_code is the process exit code. A non-zero exit code alongside a success ping might indicate a partial failure worth investigating.

All three are optional and appear in the ping history table on the monitor's dashboard.
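If your job is a Python script, you can measure these values yourself and attach them as query parameters. The sketch below makes a few assumptions worth flagging: measure and build_ping_url are names made up for this example, and tracemalloc only counts memory allocated by Python itself, so its peak will be lower than the process's real peak memory.

```python
import time
import tracemalloc
import urllib.parse


def measure(job) -> dict:
    """Run `job()` and return its runtime in seconds and peak Python memory in bytes."""
    tracemalloc.start()
    started = time.perf_counter()
    try:
        job()
    finally:
        runtime = time.perf_counter() - started
        _, peak = tracemalloc.get_traced_memory()
        tracemalloc.stop()
    return {"runtime": round(runtime, 3), "memory": peak}


def build_ping_url(ping_url: str, fields: dict) -> str:
    """Append the metadata fields to the ping URL as a query string."""
    return f"{ping_url}?{urllib.parse.urlencode(fields)}"
```

A script would call measure() around its main function, add exit_code to the returned dict, and send a GET to the URL that build_ping_url() produces.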

Log entries for long-running jobs

For jobs that run for hours - large data migrations, overnight batch processes - you can send intermediate progress updates to the /log endpoint:

curl -X POST https://www.sitevitals.co.uk/ping/a3f8x9k2/log -d "Processed 50,000 of 200,000 records"

Log entries are recorded in the ping history but don't change the monitor's status. They're there for visibility. If a long-running job goes silent halfway through, the last log entry tells you exactly where it got to.
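In a batch loop, you'd typically send a log entry every N records rather than on every iteration. Here's a minimal sketch: process_with_progress, post_log and the 50,000-record interval are all made up for this example, and the message format simply copies the curl example above.

```python
import urllib.request

PING_URL = "https://www.sitevitals.co.uk/ping/a3f8x9k2"  # sample URL from this post


def post_log(message: str, ping_url: str = PING_URL) -> None:
    """POST a progress message to the /log endpoint, ignoring network errors."""
    request = urllib.request.Request(ping_url + "/log", data=message.encode("utf-8"))
    try:
        with urllib.request.urlopen(request, timeout=10):
            pass
    except OSError:
        pass


def process_with_progress(records, handle, log=post_log, every: int = 50_000) -> None:
    """Call `handle` on each record and log progress after every `every` records."""
    total = len(records)
    for done, record in enumerate(records, start=1):
        handle(record)
        # Skip the final record: the success ping covers completion.
        if done % every == 0 and done < total:
            log(f"Processed {done:,} of {total:,} records")
```

Keeping the interval large means a job that handles millions of rows sends a handful of log entries, not millions of HTTP requests.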

What happens when a job misses its window

SiteVitals checks every minute. When a monitor's expected ping time passes without a signal, the status moves from up to late. No alert is sent yet - this is the grace period, and it exists because most jobs have some natural variation in when they run.

If the grace period expires and there's still no ping, the status moves to down and an alert is sent to your configured channels - email, Slack, webhook, or in-app notification. Each monitor has its own alert channels, independent of your site monitoring alerts.

When the job recovers and a success ping arrives - whether you re-run it by hand or fix the underlying issue and let the next scheduled run complete - the status moves back to up and a recovery notification is sent.

An explicit /fail signal skips the grace period entirely and moves straight to down. If your job knows it failed, there's no reason to wait.

Timeline integration

Cron monitors are standalone entities - they don't have to belong to a site. A database backup job, an email queue processor, a report generator - these often have no inherent relationship to a specific URL.

But when a cron job is related to a site - a WordPress cron that processes scheduled posts, a cache warmer that pre-renders pages, a deployment script - you can link the monitor to a site. When you do, cron failures and recoveries appear on that site's Change Intelligence timeline alongside SEO shifts, security events, content changes, and performance regressions. If your PageSpeed score dropped the same night your cache warmer stopped running, the timeline shows both events side by side.

Availability and pricing

Cron job monitoring is available on all paid plans. The number of monitors you can create depends on your plan:

| Plan | Cron monitors |
|---|---|
| Starter (£19/month) | 5 |
| Marketer (£45/month) | 15 |
| Agency (£99/month) | 30 |
| Enterprise | Custom |

Ping history retention follows the same policy as other monitoring data - 90 days on Starter, 180 on Marketer, 365 on Agency.

If you're already on a paid plan, the feature is live in your dashboard now. Head to Cron Jobs in the sidebar, create your first monitor, and you'll have a ping URL in under a minute.

If you're on the free plan and want to try it, take a look at our plans. Starter gets you cron monitoring alongside the full suite of site health checks - SEO, security, performance, integrity, and content monitoring - for £19 a month.

By Tom Freeman · Co-Founder & Lead Developer

Full-stack developer specialising in high-performance web applications and automated monitoring.